3DAIStudio vs Seedance 2.0

Side-by-side comparison to help you choose the right AI tool.

3DAIStudio

Join over a million creators using 3DAIStudio to instantly generate high-quality 3D models from just a text prompt or an image.

Last updated: April 4, 2026

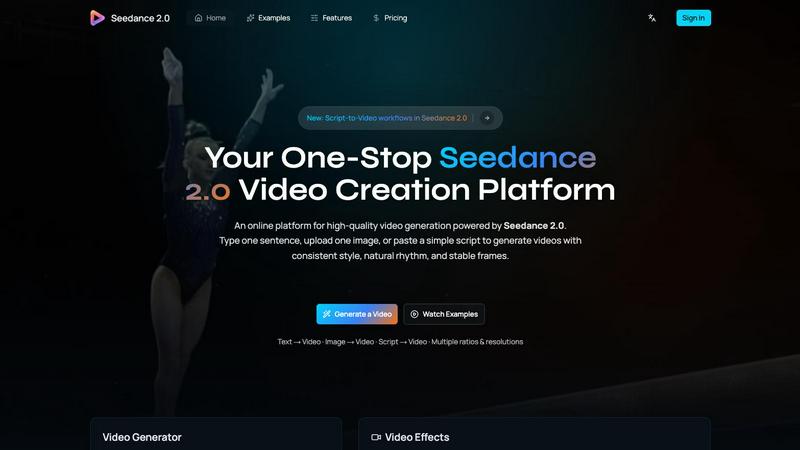

Seedance 2.0

Generate high-quality videos from text or images, with a focus on consistent style, natural motion, and stable frames.

Overview

About 3DAIStudio

3DAIStudio is an AI-powered platform that turns text prompts or 2D images into high-quality, production-ready 3D models in seconds. It aims to make 3D content creation, once a complex process that could take days, accessible to anyone with an idea. Game developers, film production artists, product designers, and complete beginners can all describe what they want or upload a reference image, and the AI generates a fully textured, optimized 3D asset.

More than 1,000,000 creators already use the platform, and tens of thousands of assets are generated on it daily.

3DAIStudio goes beyond basic generation. It offers a suite of tools for texturing, mesh optimization (Quad-Remesh), AI image generation, and more, all designed to integrate into professional pipelines for Unity, Unreal Engine, and other major platforms.

About Seedance 2.0

Seedance 2.0 is the latest AI video generation model developed by ByteDance's Seed research team. Building on the original Seedance, it delivers major improvements in motion coherence, physical realism, and multimodal generation capability.

Key Features

• Text-to-Video: Turn a single sentence into a complete cinematic scene with coherent motion

• Image-to-Video: Use reference images to guide composition, identity, and visual style

• Integrated Audio Generation: The Pro version generates synchronized video and audio in a single pass, including sound effects, background music, speech synthesis, and multilingual lip-sync

Technical Architecture

Seedance 2.0 uses a novel diffusion transformer architecture designed for temporal consistency between video frames. Unlike models that treat each frame independently, Seedance 2.0's temporal attention mechanism:

• Reuses motion cues across frames

• Preserves character identity and proportions

• Maintains consistent lighting and geometry

• Produces fewer flickers and smoother transitions
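
To make the idea of temporal attention concrete, here is a minimal sketch in plain Python. It is not Seedance 2.0's implementation, whose internals are not described here. It assumes a single spatial location, identity query/key/value projections, and made-up feature values, and it uses the mean change between consecutive frames as a rough stand-in for flicker. All function names are hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def temporal_attention(frames):
    """Let each frame attend to every frame in the clip.

    frames: list of T feature vectors, one per frame, all for the same
    spatial location. Query, key, and value are the raw features
    (identity projections), an assumption made to keep the sketch short.
    """
    dim = len(frames[0])
    scale = 1.0 / math.sqrt(dim)
    output = []
    for query in frames:
        # Similarity of this frame to every frame in the clip.
        scores = [scale * sum(q * k for q, k in zip(query, key)) for key in frames]
        weights = softmax(scores)
        # Weighted average of all frames' features.
        mixed = [sum(w * f[i] for w, f in zip(weights, frames)) for i in range(dim)]
        output.append(mixed)
    return output


def frame_to_frame_jitter(frames):
    """Mean absolute change between consecutive frames (crude flicker proxy)."""
    total = 0.0
    for prev, cur in zip(frames, frames[1:]):
        total += sum(abs(a - b) for a, b in zip(prev, cur)) / len(cur)
    return total / (len(frames) - 1)


# Hypothetical per-frame features: a stable signal plus per-frame noise.
noisy = [[1.0, 0.0], [0.8, 0.3], [1.1, -0.2], [0.9, 0.1]]
smoothed = temporal_attention(noisy)

print("jitter before:", frame_to_frame_jitter(noisy))
print("jitter after: ", frame_to_frame_jitter(smoothed))
```

Because each output frame is a weighted average over the whole clip, consecutive outputs differ less than the raw per-frame features do. A production model would use learned projections and many attention heads, and would balance this sharing against preserving real motion.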

Physics-Aware Motion

Seedance 2.0 is built to render physical dynamics convincingly, including:

• Cloth fluttering in the wind

• Water splash physics

• Realistic flames and smoke

• Complex particle effects