diffray vs OpenMark AI

Side-by-side comparison to help you choose the right AI tool.

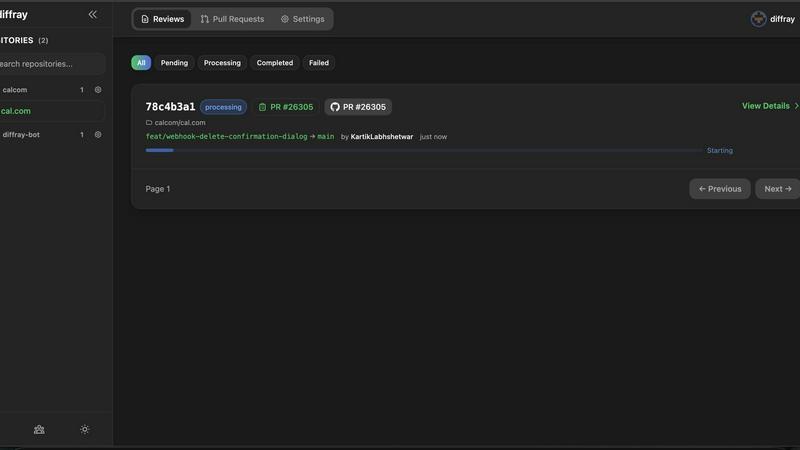

diffray

diffray's AI code reviews catch real bugs with 87% fewer false positives, giving teams cleaner, more reliable code.

Last updated: February 28, 2026

OpenMark AI

Stop guessing which AI model to use. Benchmark 100+ models on your actual task for cost, speed, and quality in minutes, with no API keys needed.

Last updated: March 26, 2026

Feature Comparison

diffray

Multi-Agent Architecture

diffray's multi-agent architecture uses more than 30 specialized agents, each focused on a different area of code quality. Developers get review feedback targeted to the specific concerns in their code rather than generic comments.
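
The idea of specialized agents can be sketched in a few lines. This is a minimal illustration only, not diffray's implementation: the `Finding` type, the two agents, and their string-matching rules are all hypothetical, and the real agents are presumably far more sophisticated.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Finding:
    agent: str    # which (hypothetical) agent raised the issue
    line: int     # 1-based line number within the reviewed snippet
    message: str


# Each hypothetical agent checks the changed lines for one concern only.
def security_agent(lines: List[str]) -> List[Finding]:
    return [
        Finding("security", i, "possible hard-coded secret")
        for i, text in enumerate(lines, start=1)
        if "password =" in text.lower()
    ]


def performance_agent(lines: List[str]) -> List[Finding]:
    return [
        Finding("performance", i, "query inside a loop")
        for i, text in enumerate(lines, start=1)
        if "for " in text and ".query(" in text
    ]


AGENTS: List[Callable[[List[str]], List[Finding]]] = [
    security_agent,
    performance_agent,
]


def review(lines: List[str]) -> List[Finding]:
    """Run every agent and merge the findings, ordered by line number."""
    findings = [f for agent in AGENTS for f in agent(lines)]
    return sorted(findings, key=lambda f: (f.line, f.agent))


diff_lines = [
    'password = "hunter2"',
    "total = sum(prices)",
    "rows = [db.query(i) for i in ids]",
]
for finding in review(diff_lines):
    print(f"L{finding.line} [{finding.agent}] {finding.message}")
```

Because each agent has a narrow remit, adding a new concern means adding one function to `AGENTS` rather than widening a single general-purpose checker.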

Reduced False Positives

By using specialized agents, diffray reports an 87% reduction in false positives. Developers can trust the feedback they receive and spend their time on real issues instead of sifting through irrelevant alerts.

Speedy Review Times

With diffray, the average time spent on pull request review drops from 45 minutes to 12 minutes per week. The time saved lets development teams respond to changes faster and accelerates the software delivery lifecycle.
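
As a quick check on what the quoted figures imply, the short calculation below works out the saving in minutes and as a percentage. Only the 45 and 12 come from the text above; the variable names are illustrative.

```python
before_minutes = 45  # quoted review time without diffray
after_minutes = 12   # quoted review time with diffray

saved = before_minutes - after_minutes
reduction_pct = 100 * saved / before_minutes

print(f"saved {saved} min ({reduction_pct:.1f}% less)")  # saved 33 min (73.3% less)
```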

Seamless Integration

diffray integrates effortlessly with leading platforms like GitHub, GitLab, and Bitbucket. This compatibility ensures that teams can incorporate diffray into their existing workflows with minimal disruption, enhancing the overall efficiency of the code review process.

OpenMark AI

Plain Language Task Benchmarking

Ditch complex configurations and scripting. Simply describe the task you want to test in natural language. OpenMark AI intelligently configures the benchmark, allowing you to run identical prompts across dozens of models instantly. This human-centric approach means you can validate real-world use cases—from email classification to code generation—without writing a single line of code, making advanced testing accessible to entire product teams.

Real API Cost & Performance Comparison

Go beyond theoretical token prices. OpenMark AI makes real, live API calls to each model provider and presents you with a detailed breakdown of the actual cost per request, latency, and scored output quality for every single test. This side-by-side comparison reveals the true trade-offs, helping you find the optimal balance between performance and budget, ensuring you never overpay for capability you don't need.
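
To show how such a side-by-side breakdown might be used, here is a minimal sketch that picks the cheapest model clearing a quality bar. The model names, numbers, `Result` type, and `cheapest_acceptable` helper are all made up for illustration; they are not OpenMark AI output or its API.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Result:
    model: str
    cost_usd: float   # measured cost per request (made-up values)
    latency_s: float  # measured latency in seconds (made-up values)
    quality: float    # scored output quality on a 0..1 scale (made-up values)


results = [
    Result("model-a", 0.0120, 2.4, 0.92),
    Result("model-b", 0.0015, 0.9, 0.88),
    Result("model-c", 0.0004, 0.6, 0.61),
]


def cheapest_acceptable(rows: List[Result], min_quality: float) -> Optional[Result]:
    """Return the lowest-cost result whose quality meets the bar, if any."""
    acceptable = [r for r in rows if r.quality >= min_quality]
    return min(acceptable, key=lambda r: r.cost_usd) if acceptable else None


for r in results:
    print(f"{r.model:<8} ${r.cost_usd:.4f}  {r.latency_s:.1f}s  quality={r.quality:.2f}")

best = cheapest_acceptable(results, min_quality=0.85)
print("pick:", best.model if best else "none")  # pick: model-b
```

In this made-up data the most expensive model scores highest, but a model at roughly one eighth of the cost still clears the 0.85 bar, which is the kind of trade-off the comparison is meant to surface.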

Stability & Variance Analysis

A single test run is just luck. OpenMark AI runs your prompts multiple times to measure consistency and output stability. See which models deliver reliable, high-quality results every time and which ones produce erratic, unpredictable outputs. This critical feature exposes variance, giving you the confidence that the model you choose will perform consistently in production, not just in a one-off demo.
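
The reason repeat runs matter can be seen with a small sketch. The scores below are invented, and summarizing stability as mean plus standard deviation is an assumption made for illustration; the source does not say which statistics OpenMark AI reports.

```python
from statistics import mean, pstdev

# Hypothetical quality scores (0..1) from five repeat runs of the same prompt.
runs = {
    "model-a": [0.90, 0.91, 0.89, 0.92, 0.90],
    "model-b": [0.95, 0.60, 0.97, 0.55, 0.93],
}


def stability(scores):
    """Return (mean, population standard deviation) for one model's runs."""
    return mean(scores), pstdev(scores)


summary = {name: stability(scores) for name, scores in runs.items()}

# List the most consistent model first (smallest spread).
for name, (avg, spread) in sorted(summary.items(), key=lambda kv: kv[1][1]):
    print(f"{name}: mean={avg:.3f} stdev={spread:.3f}")
```

A single lucky run of `model-b` (0.97) would make it look better than any run of `model-a`, yet across five runs it averages lower and varies far more.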

Hosted Catalog with No API Key Hassle

Access a massive, constantly updated catalog of 100+ leading models without the headache of signing up for and configuring individual API keys from OpenAI, Anthropic, Google, and others. Simply use OpenMark's credit system to run benchmarks. This centralized access dramatically speeds up the evaluation process, letting you focus on analysis and decision-making instead of administrative setup.

Use Cases

diffray

Enhanced Code Quality for Teams

Development teams looking to improve their code quality can leverage diffray's specialized feedback to catch bugs, security vulnerabilities, and performance issues early in the development cycle, leading to more robust software.

Agile Development Environments

In fast-paced agile environments, diffray helps teams streamline their pull request reviews. By providing quick and accurate feedback, developers can iterate rapidly and maintain momentum without compromising on code quality.

Continuous Integration and Deployment

For organizations practicing continuous integration and deployment, diffray's real-time code analysis ensures that code quality remains high, preventing problematic code from being merged into the main branch and reducing the risk of deployment failures.

Educational Tool for New Developers

New developers can use diffray as an educational tool to learn best coding practices. The clear, actionable feedback helps them understand code quality metrics and improve their skills over time, fostering growth and development.

OpenMark AI

Pre-Deployment Model Selection

You're about to ship a new AI-powered feature. Instead of guessing between GPT-4, Claude 3, or Gemini, use OpenMark AI to test all contenders on your exact task. Compare real costs, accuracy, and speed in one dashboard to make a data-driven decision that aligns with your technical requirements and budget, ensuring you launch with the best-fit model from day one.

Cost Optimization for Scaling Applications

Your application is live, but API costs are creeping up. Use OpenMark AI to benchmark newer, more cost-efficient models against your current provider. Discover if a smaller, faster model can deliver comparable quality for a fraction of the price, or identify where you can downgrade model tiers without sacrificing user experience, directly boosting your margins.

Validating Model Consistency for Critical Tasks

For tasks where reliability is non-negotiable—like legal document analysis, medical data extraction, or financial summarization—you need consistent outputs. OpenMark AI's repeat-run analysis shows you the variance. Identify which models are stable workhorses and which are unpredictable, preventing costly errors and ensuring trust in your automated workflows.

Prototyping & Research for AI Products

Exploring a new AI concept? Rapidly prototype by testing a wide range of models on your novel task or prompt chain. OpenMark AI lets you quickly see which model families excel at specific capabilities like reasoning, creativity, or instruction-following, accelerating your R&D phase and providing concrete data to guide your development roadmap.

Overview

About diffray

diffray is an AI code review tool built around pull requests. Where conventional code review tools often apply a single one-size-fits-all model, diffray uses a multi-agent architecture with more than 30 specialized agents. Each agent scrutinizes a specific aspect of code quality, including security, performance, bugs, and SEO. diffray reports that this focused approach reduces false positives by 87%, making genuine issues easier to pinpoint, and cuts time spent on pull request review from an average of 45 minutes to 12 minutes per week. It integrates with popular platforms like GitHub, GitLab, and Bitbucket, and suits organizations that place a high value on code quality. With clear, actionable feedback and a contextual understanding of your codebase, diffray lets developers concentrate on what matters most: delivering high-quality software that meets user needs and expectations.

About OpenMark AI

Stop playing roulette with your AI model choices. OpenMark AI is the definitive, no-code platform that lets you benchmark 100+ large language models (LLMs) on your actual tasks before you commit to a single API. Forget datasheet promises and marketing hype. Describe what you need in plain English—whether it's complex data extraction, creative writing, or agentic reasoning—and run the same prompt against a massive catalog of models from OpenAI, Anthropic, Google, and more in one seamless session. You get side-by-side results comparing real API costs, latency, scored output quality, and critical stability metrics across repeat runs. This means you see the variance and consistency, not just a single lucky output. Built for pragmatic developers and product teams, OpenMark AI cuts through the noise with hosted benchmarking credits, eliminating the nightmare of managing a dozen separate API keys. It’s the essential pre-deployment tool for anyone who cares about cost efficiency (quality you get for the price you pay) and shipping reliable AI features with confidence. Join thousands of developers worldwide who have moved from guessing to knowing.

Frequently Asked Questions

diffray FAQ

How does diffray reduce false positives?

diffray minimizes false positives by using a multi-agent architecture where each agent is specialized in different aspects of code quality. This targeted approach allows for more accurate assessments and helps developers focus on real issues.

Can diffray integrate with my existing tools?

Yes, diffray seamlessly integrates with popular platforms like GitHub, GitLab, and Bitbucket, allowing teams to incorporate it into their existing workflows without disruption.

What kind of feedback can I expect from diffray?

Users can expect clear, actionable feedback that highlights specific areas of concern, such as security vulnerabilities, performance bottlenecks, and potential bugs, enabling developers to make informed decisions on code improvements.

Is diffray suitable for small teams or just large organizations?

diffray is designed to be beneficial for teams of all sizes. Whether you are part of a small startup or a large organization, diffray's features can help improve code quality and efficiency across the board.

OpenMark AI FAQ

How is OpenMark AI different from other LLM benchmarks?

Most benchmarks test models on generic, academic datasets. OpenMark AI is built for your specific, real-world tasks. We run live API calls, giving you actual cost and latency data alongside quality scores for your exact use case. We also test stability across multiple runs, showing variance—something static leaderboards completely miss.

Do I need my own API keys to use OpenMark AI?

No! That's a key benefit. OpenMark AI operates on a credit system. You purchase credits and can run benchmarks against our entire hosted catalog of models without ever needing to supply or manage separate API keys from OpenAI, Anthropic, or Google. It's a unified, hassle-free testing platform.

What kind of tasks can I benchmark?

Virtually anything! Developers use it for classification, translation, data extraction, RAG system evaluation, agent routing logic, research assistance, Q&A, image analysis prompts, and creative writing. If you can describe it in plain language, you can benchmark it. The platform is designed for flexible, real-world application testing.

How does the scoring and quality assessment work?

OpenMark AI uses a combination of automated evaluation metrics tailored to your task type (like accuracy, relevance, or faithfulness) and, where configured, can incorporate human-like judgment criteria. The system scores each model's output consistently across all runs, providing a clear, comparable quality metric alongside the hard cost and speed data.

Alternatives

diffray Alternatives

diffray is an AI code review tool with a multi-agent architecture of over 30 specialized agents, which provides targeted feedback that improves code quality while significantly reducing false positives. Developers often seek alternatives for reasons such as pricing, feature sets, integration capabilities, and specific platform needs. As teams evolve, their requirements may shift, prompting them to explore options that better fit their workflows or budget constraints. When searching for a diffray alternative, consider the level of customization offered, how well the tool integrates with your existing tools, and the overall user experience. Look for solutions that provide actionable feedback, maintain context awareness of your codebase, and deliver a streamlined review process, so that your team can keep its focus on producing high-quality software without unnecessary interruptions.

OpenMark AI Alternatives

OpenMark AI is a leading developer tool for task-level benchmarking of large language models. It lets you test over 100 LLMs on your specific prompts, comparing real-world cost, speed, quality, and stability in one browser-based session. This is the go-to platform for teams who need data-driven confidence before launching an AI feature. Developers often explore alternatives for various reasons. Some might need a different pricing model or a self-hosted solution for stricter data governance. Others may seek tools with deeper integration into their existing CI/CD pipeline or require benchmarking for a niche set of models not covered elsewhere. When evaluating other options, focus on what matters for your workflow. Key considerations include whether the tool uses real API calls for accurate results, how it measures output consistency beyond a single run, and if it provides a holistic view of cost-efficiency—balancing price with actual performance for your task.