Blueberry vs Fallom

Side-by-side comparison to help you choose the right AI tool.

Blueberry

Blueberry is the all-in-one Mac app that unifies your editor, terminal, and browser for seamless web app development.

Last updated: February 28, 2026

Fallom

See every LLM call in real time for effortless AI agent tracking, analysis, and compliance.

Last updated: February 28, 2026

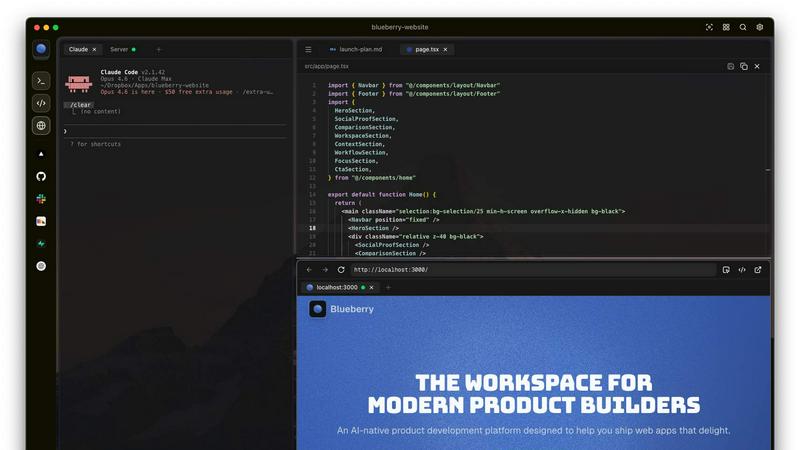

Feature Comparison

Blueberry

Integrated Workspace

Blueberry combines a terminal, code editor, and preview browser into one cohesive environment. This integration eliminates the need for constant app-switching, allowing developers to focus on building and shipping their applications without distractions.

Live Context for AI Models

With Blueberry's MCP server, developers can run AI models directly in the terminal. These models have access to the entire workspace, including open files and terminal output, providing them with the context needed to understand and assist in code development effectively.

Advanced Code Editor

The built-in code editor offers full syntax highlighting, multi-cursor support, find and replace, and Git integration. This feature set lets users edit code efficiently and gives AI models real-time context for better assistance.

Flexible Preview Options

Blueberry allows developers to preview their applications on desktop, tablet, and mobile views. This feature ensures that developers can see how their users will experience the application, helping to catch any visual discrepancies before deployment.

Fallom

Real-Time LLM Call Tracing

See every interaction as it happens with a live, queryable trace table. Drill down into individual calls to inspect the exact prompt, model response, tool calls with arguments, token usage, latency, and per-call cost. This granular visibility is the foundation for debugging complex agent failures and understanding exactly what your AI is doing in production, turning opaque processes into transparent, actionable data.

Granular Cost Attribution & Analytics

Move beyond vague cloud bills. Fallom automatically breaks down your AI spend by model, user, team, session, or even specific customer. Visual dashboards show you exactly where every dollar is going—whether it's GPT-4o, Claude, or Gemini—enabling precise budgeting, showback/chargeback, and data-driven decisions to optimize for cost-performance without sacrificing quality.
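As a rough illustration of the grouping described above, the sketch below sums per-call cost by an arbitrary attribute. It is not Fallom's API: the `LLMCall` record, the `PRICES` table, the model names, and the prices themselves are all hypothetical placeholders.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical prices in USD per million tokens; real prices vary by provider.
PRICES = {
    "model-a": {"input": 2.50, "output": 10.00},
    "model-b": {"input": 3.00, "output": 15.00},
}

@dataclass
class LLMCall:
    """Hypothetical record of one traced LLM call."""
    model: str
    user: str
    team: str
    input_tokens: int
    output_tokens: int

def call_cost(call: LLMCall) -> float:
    """Cost of one call in USD, from its token counts and the price table."""
    price = PRICES[call.model]
    return (call.input_tokens * price["input"]
            + call.output_tokens * price["output"]) / 1_000_000

def attribute_costs(calls, key):
    """Sum per-call cost grouped by an attribute name such as 'model' or 'team'."""
    totals = defaultdict(float)
    for call in calls:
        totals[getattr(call, key)] += call_cost(call)
    return dict(totals)

calls = [
    LLMCall("model-a", "alice", "support", 1_000_000, 100_000),
    LLMCall("model-b", "bob", "search", 200_000, 100_000),
    LLMCall("model-a", "bob", "search", 400_000, 0),
]
by_model = attribute_costs(calls, "model")
by_team = attribute_costs(calls, "team")
```

The same function can group by `user` or any other field on the record, which is the idea behind showback and chargeback reports.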

Enterprise Compliance & Audit Trails

Built for regulated industries, Fallom provides immutable, complete audit trails of all AI activity. It logs inputs, outputs, model versions, and user consent, directly supporting requirements for GDPR, the EU AI Act, and SOC 2. Features like configurable privacy mode allow you to redact sensitive data while maintaining full telemetry, ensuring you can deploy AI with confidence.

Advanced Workflow Debugging Tools

Debug complex, multi-step agentic workflows with ease. The timing waterfall visualization breaks down latency across LLM calls and tool executions to pinpoint bottlenecks. In addition, full tool call visibility lets you inspect every function call, its arguments, and its returned results, making it simple to identify logic errors or external API failures in intricate chains.
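To make the waterfall idea concrete, here is a minimal text-based sketch, assuming each step of a trace is available as a span with a start and end time. The `Span` type and the sample timings are hypothetical and are not taken from Fallom.

```python
from dataclasses import dataclass

@dataclass
class Span:
    """Hypothetical span: one LLM call or tool execution within a trace."""
    name: str
    start_ms: int  # start time relative to the beginning of the trace
    end_ms: int

def waterfall(spans, width=40):
    """Render spans as text bars on a shared time axis, earliest first."""
    total = max(s.end_ms for s in spans)
    lines = []
    for s in sorted(spans, key=lambda s: s.start_ms):
        duration = s.end_ms - s.start_ms
        offset = s.start_ms * width // total
        length = max(1, duration * width // total)
        lines.append(f"{s.name:<12}|{' ' * offset}{'#' * length} {duration} ms")
    return lines

def slowest(spans):
    """Return the span with the longest duration."""
    return max(spans, key=lambda s: s.end_ms - s.start_ms)

spans = [
    Span("plan (LLM)", 0, 800),
    Span("search tool", 800, 2800),
    Span("answer (LLM)", 2800, 4000),
]
for line in waterfall(spans):
    print(line)
print("bottleneck:", slowest(spans).name)
```

In this made-up trace the tool call, not either LLM call, takes the most time, which is the kind of finding a latency waterfall is meant to surface.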

Use Cases

Blueberry

Seamless Development

Developers can use Blueberry to create web applications without the hassle of managing multiple tools. With everything in one place, teams can collaborate more effectively and stay on the same page throughout the development process.

Enhanced AI Collaboration

Product teams can leverage AI capabilities by running models like Codex or Claude directly in their workspace. This allows for instant feedback on coding queries or troubleshooting, improving efficiency and reducing the time spent on problem-solving.

Rapid Prototyping

With integrated preview options, designers and developers can quickly prototype and iterate on their web applications. This capability allows teams to gather user feedback faster, leading to better product outcomes.

Contextual Assistance

Blueberry's ability to provide AI models with full context means that developers can receive tailored assistance based on their specific project requirements. This feature reduces the need for manual context switching, allowing for a smoother workflow and better productivity.

Fallom

Optimizing AI Agent Performance & Reliability

Engineering teams use Fallom to monitor live AI agents handling customer support, data analysis, or booking tasks. By analyzing latency waterfalls and tool call success rates, they can quickly identify and fix performance bottlenecks, reduce error rates, and ensure a reliable user experience, leading to higher customer satisfaction and trust in their AI products.

Controlling and Forecasting AI Operational Costs

Finance and engineering leaders leverage Fallom's cost attribution dashboards to gain full transparency into unpredictable AI spending. They track costs per project, team, or feature, forecast budgets accurately, implement chargebacks, and identify opportunities to switch models for less expensive calls without impacting output quality, directly improving unit economics.

Ensuring Regulatory Compliance for AI Deployments

Legal and compliance teams in healthcare, finance, and enterprise software rely on Fallom to generate the necessary audit trails for AI governance. The platform logs all required data (prompts, responses, model versions, and user consent), providing a verifiable record to demonstrate adherence to GDPR, the EU AI Act, and internal policy requirements during audits.

Improving AI Products with Data-Driven Insights

Product managers and developers use Fallom's session tracking and customer analytics to understand how users interact with AI features. They identify power users, analyze common query patterns, and A/B test different prompts or models with the integrated prompt store and traffic splitting, then iterate on product offerings based on real data.

Overview

About Blueberry

Blueberry is a macOS application for product builders who want to streamline their workflow by consolidating editing, terminal, and browsing environments into a single focused workspace, so they no longer lose context and productivity switching between applications. Developers can connect AI models like Claude, Gemini, and Codex through its built-in MCP (Model Context Protocol) server, which lets those models interact in real time with code, terminal output, and live previews, all in one place. Aimed at software engineers, web developers, and product managers, Blueberry helps teams build and ship web applications efficiently. It is free to use during its beta phase.

About Fallom

Fallom is an AI-native observability platform built from the ground up for Large Language Models (LLMs) and autonomous agents. It addresses the "black box" problem for engineering and product teams deploying AI in production: where traditional monitoring tools fall short, Fallom provides granular, end-to-end visibility into every LLM call, tool invocation, and multi-step workflow. A real-time dashboard shows each AI interaction (prompts, outputs, tokens, latency, and exact costs), so you can debug a failing agent, optimize a slow chain, or explain a cost spike. Fallom is used by fast-moving startups and global enterprises building reliable, cost-effective, and compliant AI applications. It unifies cost attribution, performance debugging, and compliance auditing in a single OpenTelemetry-native platform that can be integrated in under five minutes.

Frequently Asked Questions

Blueberry FAQ

What platforms does Blueberry support?

Blueberry is currently available exclusively for macOS users, providing a focused and optimized experience for Mac developers.

How does Blueberry's MCP feature work?

The Model Context Protocol (MCP) allows AI models to access and interact with your entire workspace, including files, terminal output, and browser previews. This ensures that the AI has the context needed to assist effectively.

Is Blueberry really free during its beta phase?

Yes, Blueberry is completely free to use during its beta phase, allowing users to explore all features without any financial commitment.

Can I integrate other tools with Blueberry?

Yes, Blueberry allows you to pin tools like GitHub, Linear, and Figma within the workspace. These integrations help maintain context and streamline your workflow even further.

Fallom FAQ

How quickly can I integrate Fallom into my existing application?

Integration is quick. With the single, OpenTelemetry-native SDK, most teams send their first traces and see data in the Fallom dashboard in under 5 minutes. There's no need to rip and replace your existing infrastructure; it layers on top of your current LLM calls and agent frameworks.

Does Fallom support all major LLM providers and frameworks?

Yes. Fallom is provider-agnostic and works with every major provider, including OpenAI (GPT), Anthropic (Claude), Google (Gemini), Cohere, and open-source models. It also integrates with popular agent frameworks like LangChain and LlamaIndex. Because it is built on OpenTelemetry, an open standard, it helps you avoid vendor lock-in.

How does Fallom handle sensitive or private user data?

Fallom is built with enterprise-grade privacy controls. You can enable "Privacy Mode" to disable full content capture, logging only metadata like token counts and latency. For more granular control, configurable redaction rules allow you to strip specific PII or sensitive keywords, ensuring compliance with strict data handling policies.
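The two controls described above can be pictured with a small sketch: a metadata-only mode and pattern-based redaction. This is an illustration under assumptions, not Fallom's implementation. The function names, the record layout, and the two regular expressions are hypothetical, and real PII detection needs far broader coverage.

```python
import re

# Illustrative redaction rules only: an email pattern and a US SSN-style pattern.
REDACTION_RULES = [
    (re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"), "[EMAIL]"),
    (re.compile(r"\b\d{3}-\d{2}-\d{4}\b"), "[SSN]"),
]

def redact(text: str) -> str:
    """Replace every match of every rule with its placeholder."""
    for pattern, placeholder in REDACTION_RULES:
        text = pattern.sub(placeholder, text)
    return text

def to_record(prompt, response, tokens, latency_ms, privacy_mode=False):
    """Build the record to log. In privacy mode, only metadata is kept."""
    record = {"tokens": tokens, "latency_ms": latency_ms}
    if not privacy_mode:
        record["prompt"] = redact(prompt)
        record["response"] = redact(response)
    return record

full = to_record("Mail jane.doe@example.com, SSN 123-45-6789", "Done.", 42, 310)
meta_only = to_record("secret prompt", "secret reply", 42, 310, privacy_mode=True)
```

Here `full` keeps the prompt and response with sensitive values replaced by placeholders, while `meta_only` contains only the token count and latency.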

Can I use Fallom to A/B test different models or prompts?

Yes, Fallom includes first-class support for experimentation. You can split traffic between different models (like GPT-4o and Claude 3.5) or different versions of prompts stored in the Prompt Store. The dashboard then lets you compare their performance, cost, and quality metrics side-by-side to make informed, data-driven deployment decisions.
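One common way to split traffic between variants is to hash a stable identifier, so that the same session always lands on the same model or prompt version. The sketch below shows that general technique. It is not a description of how Fallom assigns traffic, and the function name, variant names, and weights are hypothetical.

```python
import hashlib

def assign_variant(session_id: str, variants: dict) -> str:
    """Map a session to a variant deterministically, in proportion to weights."""
    digest = hashlib.sha256(session_id.encode("utf-8")).digest()
    # Interpret the first 8 bytes as an integer and scale it to roughly [0, 1).
    point = int.from_bytes(digest[:8], "big") / 2**64
    total = sum(variants.values())
    cumulative = 0.0
    for name, weight in variants.items():
        cumulative += weight / total
        if point < cumulative:
            return name
    return name  # fallback for floating-point rounding at the upper edge

# Hypothetical 90/10 split between two prompt or model variants.
split = {"variant-a": 90, "variant-b": 10}
assignments = [assign_variant(f"session-{i}", split) for i in range(2000)]
share_a = assignments.count("variant-a") / len(assignments)
```

Because the assignment depends only on the session identifier and the weights, it needs no stored state, and `share_a` should come out close to 0.9 for a large enough sample.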

Alternatives

Blueberry Alternatives

Blueberry is an innovative Mac app designed for developers, seamlessly integrating an editor, terminal, and browser into one focused workspace. This powerful tool allows users to connect various AI models, such as Claude and Codex, enabling a more efficient workflow by eliminating the need to switch between applications. With Blueberry, users can view files, terminal output, and live previews simultaneously, streamlining their coding and development processes. However, users often seek alternatives to Blueberry for various reasons, including pricing, specific feature sets, or compatibility with different platforms. When searching for an alternative, consider factors such as ease of use, integration capabilities, and the range of supported features that align with your workflow needs. Prioritize options that enhance productivity and provide a cohesive environment for coding and development tasks.

Fallom Alternatives

Fallom is a leading AI-native observability platform in the development category, built specifically for monitoring and managing LLM and AI agent workloads in production. It gives teams deep visibility into every prompt, response, and tool call, which is crucial for debugging and cost control. Users often explore alternatives for various reasons, such as budget constraints, the need for different feature sets, or integration with an existing tech stack. Some teams might prioritize simpler dashboards, while larger enterprises may require more extensive compliance frameworks or specific deployment options. When evaluating other solutions, focus on core capabilities: real-time tracing of LLM calls, detailed cost breakdowns, and robust compliance tools like audit trails. The ideal platform should integrate smoothly with your workflow, scale with your AI usage, and provide clear insights to optimize both performance and spending.